Biography

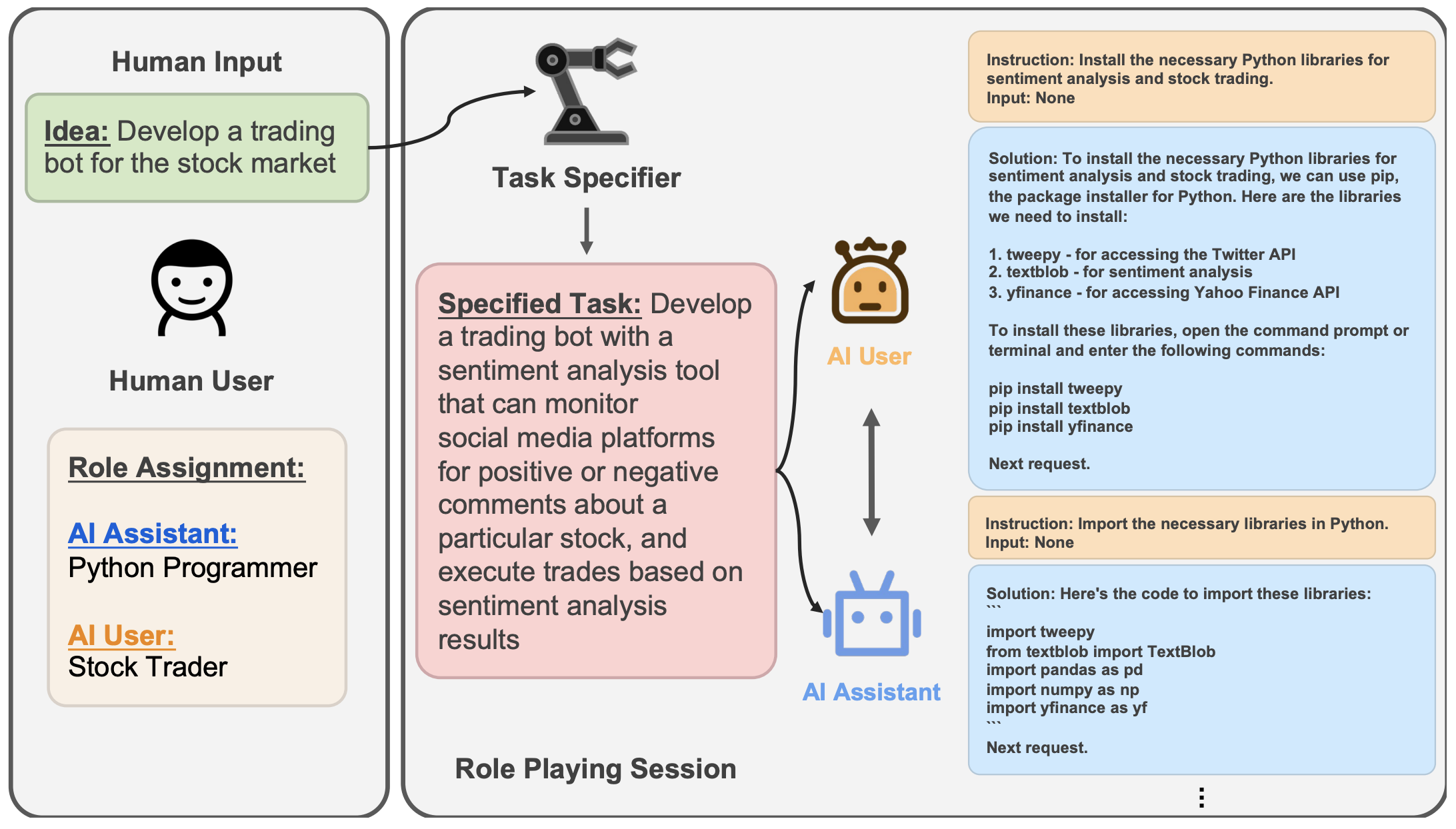

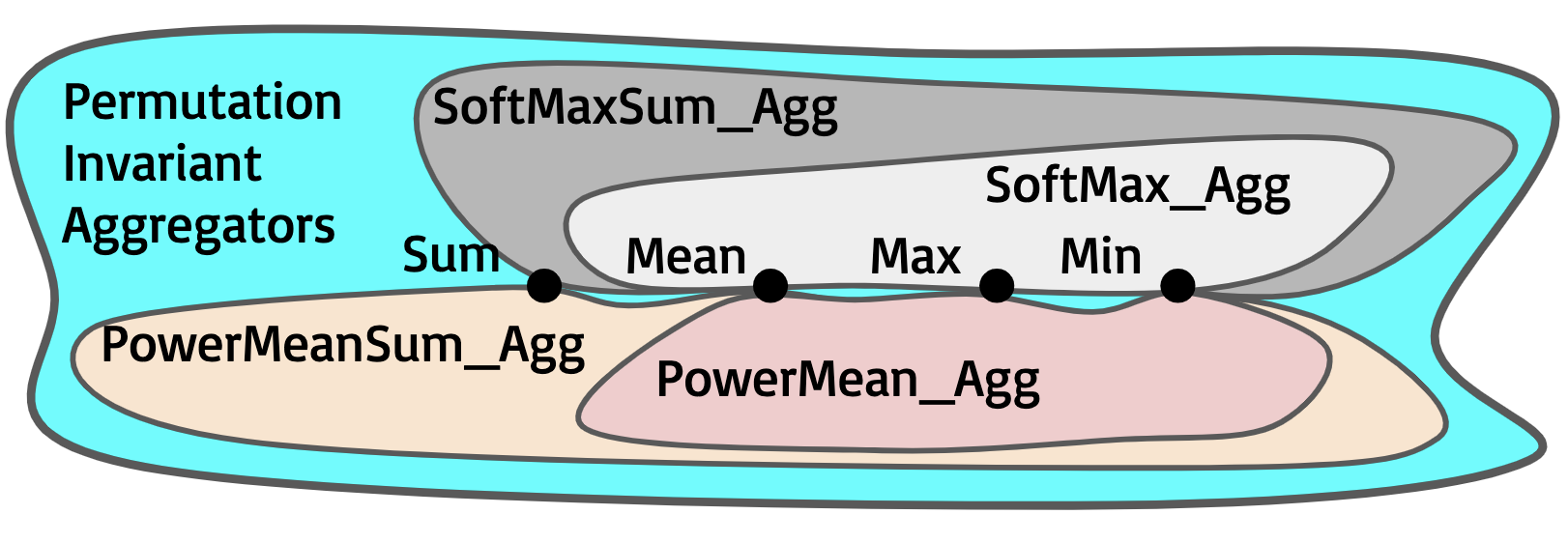

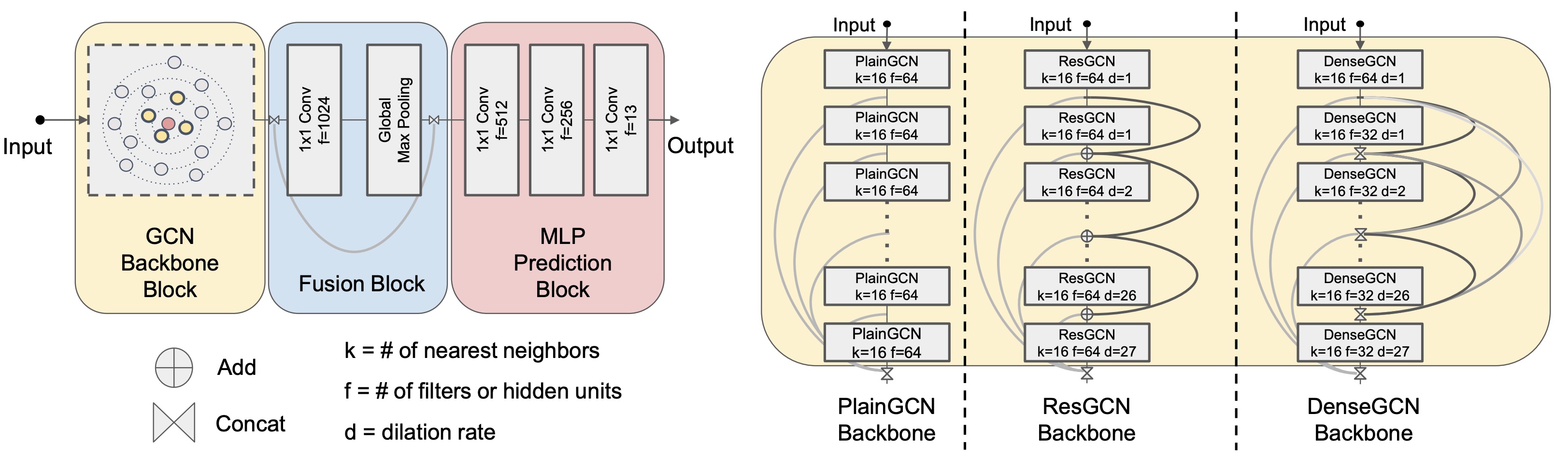

Guohao Li is an artificial intelligence researcher and open-source contributor working on building intelligent agents that can perceive, learn, communicate, reason, and act. He is the core lead of the open-source projects CAMEL-AI.org and DeepGCNs.org.

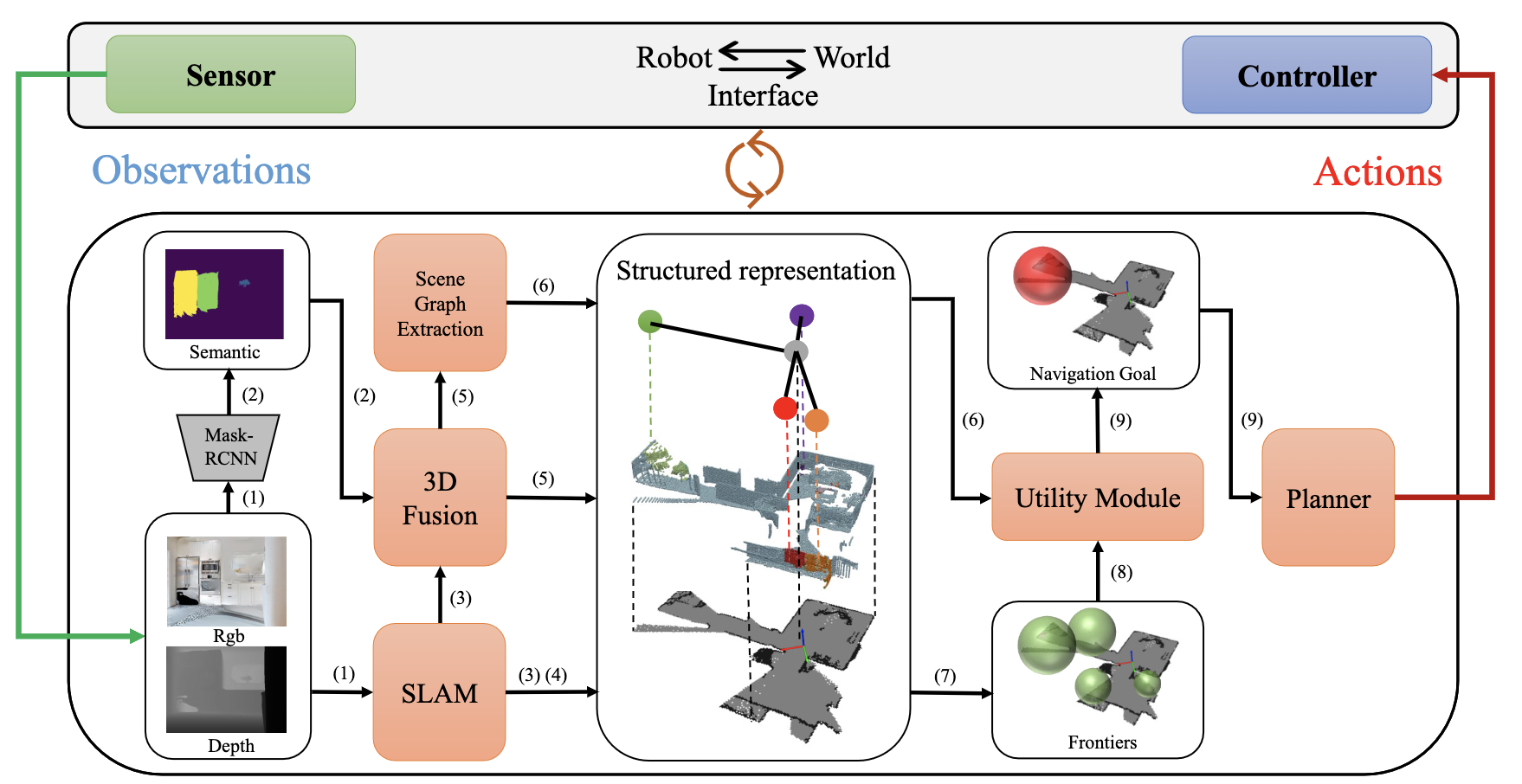

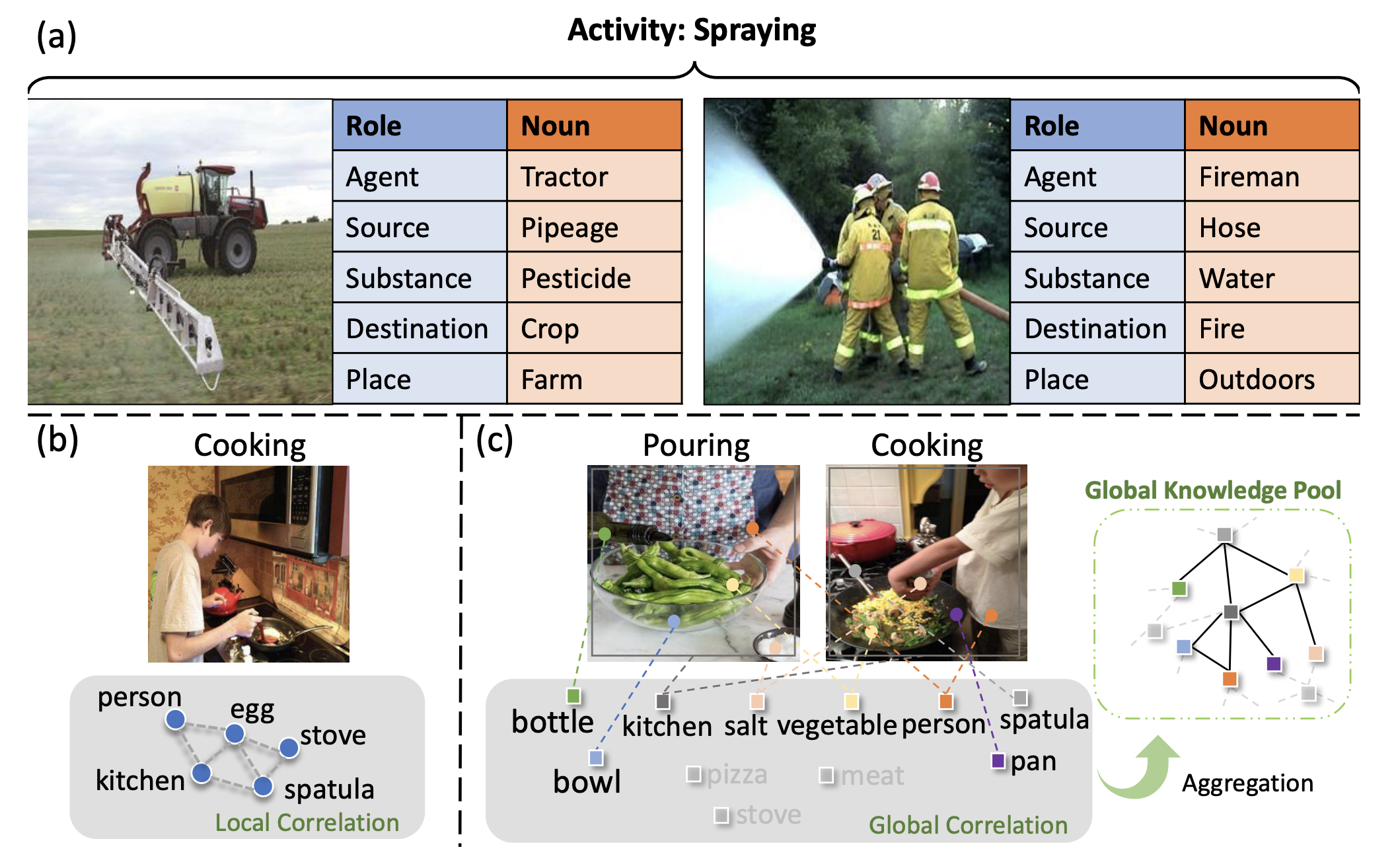

Guohao Li was a postdoctoral researcher at the University of Oxford with Prof. Philip Torr. He obtained his PhD in Computer Science from King Abdullah University of Science and Technology (KAUST), advised by Prof. Bernard Ghanem. During his PhD studies, he was fortunate to work at Intel ISL as a research intern with Dr. Vladlen Koltun and Dr. Matthias Müller. He was also a visiting researcher at ETH Zurich CVL and a PhD intern at Kumo AI and PyG.org with Prof. Jure Leskovec and Dr. Matthias Fey. His primary research interests include Autonomous Agents, Graph Machine Learning, Computer Vision, and Embodied AI. He has published related papers in top-tier conferences and journals such as ICCV, CVPR, ICML, NeurIPS, RSS, 3DV, and TPAMI.

We are actively looking for self-motivated research and engineering interns and open-source contributors at CAMEL-AI.org. If you are interested, feel free to reach out by email at guohao.li@eigent.ai.

Interests

- Autonomous Agents

- Graph Machine Learning

- Computer Vision

- Embodied AI

Education

PhD in CS, 2018-2022

King Abdullah University of Science and Technology

MSE in EE, 2015-2018

University of Chinese Academy of Sciences

Joint MS in CS, 2015-2018

ShanghaiTech University

BE in EE, 2011-2015

Harbin Institute of Technology

University of Oxford

Kumo AI

ETH Zurich

Intel Intelligent Systems Lab